Disclaimer:

I am not a lawyer and nothing in this post is (or should be construed as) a legal opinion, legal advice, or legal guidance. If you need legal assistance, please contact a fully licensed, certified, and authorized legal professional. No specific government, public, or private organization is being identified in this article. Any similarities or likenesses in this article to any government, public, or private organization are incidental and unintended, and do not constitute any suggestion that the organization is engaged in the practices described in this article. Criminal acts by any entity, person, or organization are strictly forbidden and not condoned by this article. This article is not intended to provide guidance on the practices described herein, and any entity that utilizes these practices does so without explicit or implied support, authorization, knowledge, consent, or association. Now on to the voice biometrics article.

The Voice Biometrics Article:

Introduction:

We live in a society where money makes the laws. Whether Congress is impotent because it benefits from that fact, or because special interests and big business have used enough power and money to blind it, is unknown, but it is probably a mix. Congress's sterility toward privacy legislation and the protection of citizens has given government, public, and private organizations great latitude to develop tools that can be leveraged against humans for financial advantage and mission progress. One such tool is voice biometric analysis. Using voice biometrics, organizations can determine a person's identity, sex, race, financial and social status, geographic place of residence (for most), emotional state, and much more, and can generate a risk profile from the results. In this article we will discuss how some organizations may collect biometric voice data, how they may train their biometric voice artificial intelligence, and how they may use that artificial intelligence to abuse consumers and possibly break the law.

Collecting Voice Biometrics Data:

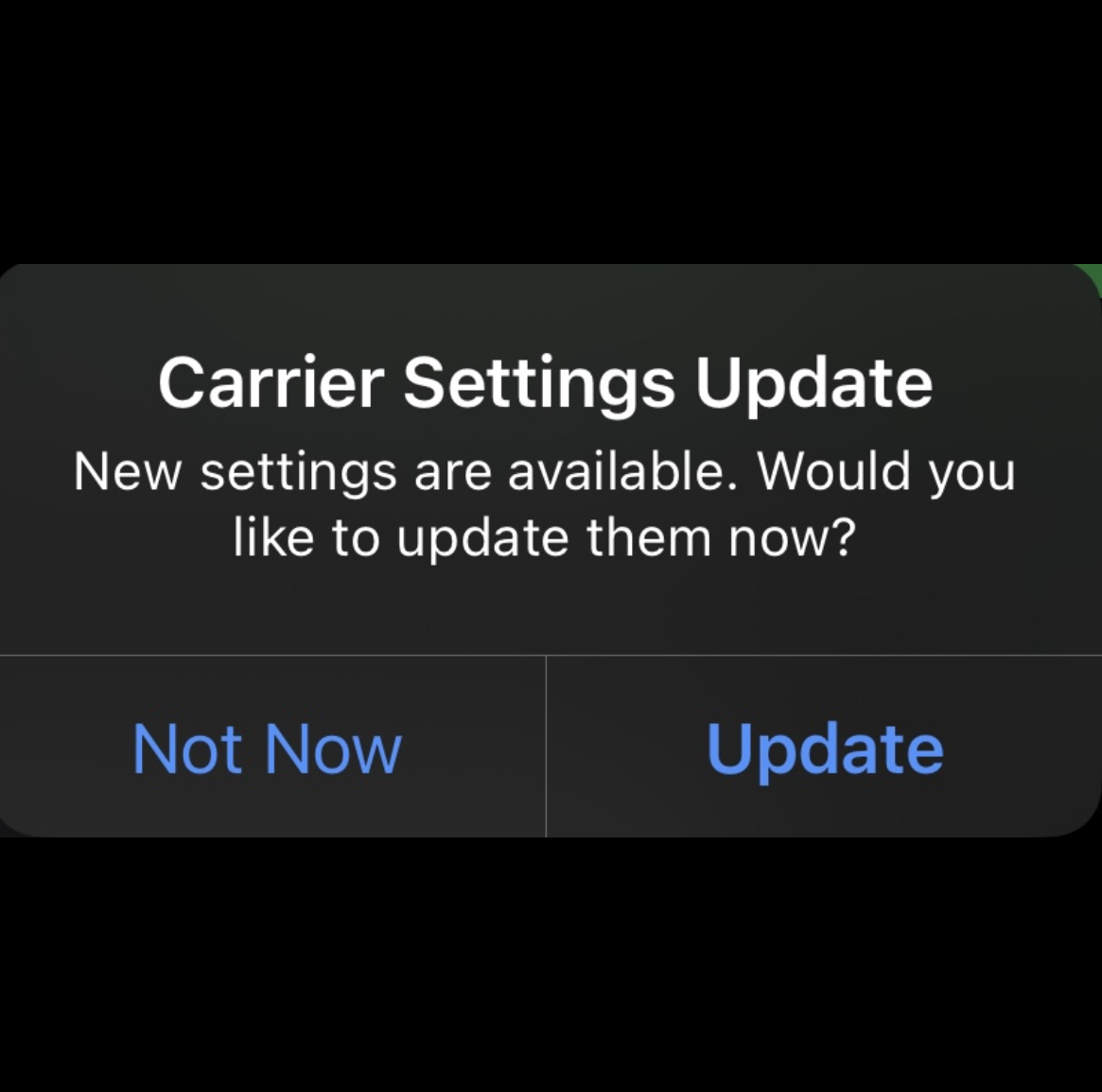

“This call may be monitored for quality and training purposes” is a phrase many people have heard when calling any number of organizations. The truth is that the call will be monitored, and there is no way for you or anyone else to “opt out” or decline having your voice recorded, because the “training” is the training of Voice Biometric Artificial Intelligence (VBAI) designed to violate your privacy. As your voice data is collected, it is used to create a voice profile of you. The first objective of the voice profile is to ensure quick and accurate identification. Personal identification using voice biometrics is said to be in wide use across many organizations, and it is obscured and hidden by those organizations because it is a “legal grey area.” So when you call, the organization knows who you are almost instantly but still asks those “verification questions” before granting access to your account. In some cases, the “verification questions” are used as training material to teach VBAI to detect early warning indicators before it is weaponized and put into production. The remainder of the call can also be used to train VBAI to determine sex, race, nationality, regionality, income level, religion, risk index, sexual orientation, stress levels, and many more highly invasive, privacy-shattering things. This is further assisted when a consumer agrees to take a survey to “rate the quality of service” they received.
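
To make the identification step concrete, here is a minimal sketch of how a voice profile could be matched against a caller. Nothing here comes from a real system: it assumes each voice has already been reduced to a short list of numbers (a "voiceprint"), and the names `cosine_similarity`, `identify_caller`, `ENROLLED`, the sample vectors, and the 0.8 threshold are all hypothetical and chosen only for illustration.

```python
import math

# Hypothetical enrolled voiceprints: caller id -> feature vector.
# Real systems would use far longer vectors produced by a trained model.
ENROLLED = {
    "caller_a": [0.9, 0.1, 0.3],
    "caller_b": [0.1, 0.8, 0.5],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a silent or empty sample matches nobody
    return dot / (norm_a * norm_b)


def identify_caller(sample, enrolled, threshold=0.8):
    """Return the id of the best match at or above the threshold, else None."""
    best_id, best_score = None, threshold
    for caller_id, profile in enrolled.items():
        score = cosine_similarity(sample, profile)
        if score >= best_score:
            best_id, best_score = caller_id, score
    return best_id
```

Under these assumptions, a new sample that sits close to an enrolled voiceprint is labeled with that caller's identity within the first moments of the call, and a sample that resembles no enrolled profile returns `None`.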

Training Voice Biometrics Artificial Intelligence:

These surveys constitute VBAI training flags that help associate speech patterns, verbal mannerisms, dialects, pitch and volume variances, and other audible indicators with outcomes such as how many times a person is likely to complain about a situation before escalating or giving up, the signs that escalation or giving up is near, the signs of potential legal ramifications, and so forth. Because these “quality assurance” surveys and calls “monitored for quality and training purposes” have been conducted for many years, many organizations hold vast treasure troves of training data and can build highly effective VBAIs without harvesting, buying, or collecting much more data. As few, if any, rules, laws, or regulations specifically address VBAI, every government, private, and public organization can continue to weaponize your own voice against your privacy to determine health issues or other private information.

Weaponizing VBAI for Profit:

Once VBAI is put fully into production and weaponized against the consumer, a government, public, or private entity may use it to determine any number of data points, but the one most relevant to this article is the risk index. The risk index is the level of risk indicated by speech patterns, phraseology, voice pitch and volume fluctuations, verbal mannerisms, and emotional cues. Different industries have different risk index priorities. For example, a hospital or emergency services organization might treat emotional state, intoxication indicators, and likelihood to commit acts of violence as top risk index priorities, while a social media organization or bank may rate likelihood of litigation, financial status, regional location, and technical prowess as its high priorities. No matter the risk index priorities, the goal is to minimize the organization's exposure to risk while (for some) generating a revenue stream. Let’s take a notional example.

Examples:

A notional consumer calls a customer service phone number to complain that the notional Amazom.con has created a product that infringes on a patented product the consumer sells on Amazom.con as an Amazom.con partner. The Amazom.con VBAI identifies the caller and determines that the caller is a minority from the Midwest, is likely to be of lower income, and is unlikely to litigate or take action against Amazom.con. The representative informs the consumer that there is nothing that can be done and that the consumer has the right to sell the product through another venue. A second notional caller phones Amazom.con with the same complaint. This time the VBAI determines that the caller is wealthy, technically savvy, possibly a lawyer, and from the Northern Virginia area. The representative transfers the call to the Amazom.con legal team and tells the consumer the transfer is to “a tier two representative.” The legal team assesses the consumer's legal claims and, assisted by VBAI risk indexes, chooses either to continue infringing upon the patent or to reach a “mutually beneficial” agreement with the consumer that will likely end with the consumer being undercut and put out of business.
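
The two notional calls above can be sketched as a weighted score followed by a routing decision. This is only an illustration of the idea of industry-specific risk index priorities: the indicator names are taken from the priorities listed earlier in this article, but the weights, the 0.6 cutoff, and the names `INDUSTRY_WEIGHTS`, `risk_index`, and `route_call` are invented for this sketch and do not describe any real product.

```python
# Hypothetical weights per industry. Each indicator is assumed to be a
# score between 0.0 and 1.0 that some upstream voice model has produced.
INDUSTRY_WEIGHTS = {
    "hospital": {
        "emotional_state": 0.4,
        "intoxication": 0.3,
        "violence_likelihood": 0.3,
    },
    "bank": {
        "litigation_likelihood": 0.4,
        "financial_status": 0.3,
        "technical_prowess": 0.3,
    },
}


def risk_index(indicators, industry):
    """Weighted sum of the indicators this industry cares about."""
    weights = INDUSTRY_WEIGHTS[industry]
    # Indicators the model did not report are treated as 0.0.
    return sum(w * indicators.get(name, 0.0) for name, w in weights.items())


def route_call(indicators, industry, escalate_at=0.6):
    """Return 'escalate' when the risk index reaches the cutoff."""
    if risk_index(indicators, industry) >= escalate_at:
        return "escalate"
    return "standard"


# The two notional callers, scored with made-up indicator values.
first_caller = {"litigation_likelihood": 0.1, "financial_status": 0.2, "technical_prowess": 0.2}
second_caller = {"litigation_likelihood": 0.9, "financial_status": 0.9, "technical_prowess": 0.8}
```

With these made-up numbers, `route_call(first_caller, "bank")` stays on the standard path while `route_call(second_caller, "bank")` is escalated, even though both callers raised the same complaint. The point of the sketch is that the difference in treatment comes entirely from what the system inferred about the caller, not from what the caller said.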

Weaponizing VBAI for Government:

Yes, government bodies, agencies, and representatives have access to the same technologies. They can use VBAI for real-time threat analytics to determine emotional state, honesty levels, stress levels, health concern indicators, and a myriad of other things, which can be used to “guide people” toward a certain course of action, reduce threat postures, and respond to escalating situations appropriately. If a caller states that a certain type of mass casualty device is located in a public area, the VBAI can estimate the caller's likely age, potential level of honesty, stress level, and emotional state. If VBAI is deployed in government, commercial, or other buildings, it can notify security of an unknown voice identity or of a situation likely to result in physical violence, or correlate indicators to flag possible espionage or sabotage.

Conclusion:

All voice data is biometric data, and there is absolutely no way to anonymize voice data because it is a biometric data point. Just like all other technologies, VBAI can be used to prevent real harm and danger. Likewise, as with all other technologies, VBAI can be (and may already be) leveraged as a weapon against consumers to violate ethical business practices, potentially break laws, and harvest massive amounts of private data that is not accessible through other means. Congress has proven many times that it is impotent when it comes to protecting the voice biometric privacy (or any other privacy) of its citizens. As VBAI can be (or may already be) weaponized against members of Congress as well, will they protect the privacy of their own ailing health, financial interests, and private affairs by passing some form of national privacy legislation?